Weather Anomalies

Climate change and temperature anomalies

If we wanted to study climate change, we can find data on the Combined Land-Surface Air and Sea-Surface Water Temperature Anomalies in the Northern Hemisphere at NASA’s Goddard Institute for Space Studies. The tabular data of temperature anomalies can be found here

To define temperature anomalies you need to have a reference, or base, period which NASA clearly states that it is the period between 1951-1980.

Run the code below to load the file:

weather <-

read_csv("https://data.giss.nasa.gov/gistemp/tabledata_v3/NH.Ts+dSST.csv",

skip = 1,

na = "***")Notice that, when using this function, we added two options: skip and na.

- The

skip=1option is there as the real data table only starts in Row 2, so we need to skip one row. na = "***"option informs R how missing observations in the spreadsheet are coded. When looking at the spreadsheet, you can see that missing data is coded as "***". It is best to specify this here, as otherwise some of the data is not recognized as numeric data.

Once the data is loaded, notice that there is an object titled weather in the Environment panel. If you cannot see the panel (usually on the top-right), go to Tools > Global Options > Pane Layout and tick the checkbox next to Environment. Click on the weather object, and the dataframe will pop up on a seperate tab. Inspect the dataframe.

For each month and year, the dataframe shows the deviation of temperature from the normal (expected). Further the dataframe is in wide format.

You have two objectives in this section:

Select the year and the twelve month variables from the

weatherdataset. We do not need the others (J-D, D-N, DJF, etc.) for this assignment. Hint: useselect()function.Convert the dataframe from wide to ‘long’ format. Hint: use

gather()orpivot_longer()function. Name the new dataframe astidyweather, name the variable containing the name of the month asmonth, and the temperature deviation values asdelta.

weather <- weather %>%

select(Year, Jan, Feb, Mar, Apr, May, Jun, Jul, Aug, Sep, Oct, Nov, Dec)

tidyweather <- pivot_longer(weather, cols = 2:13,

names_to = "month",

values_to = "delta"

)Inspect your dataframe. It should have three variables now, one each for

- year,

- month, and

- delta, or temperature deviation.

Plotting Information

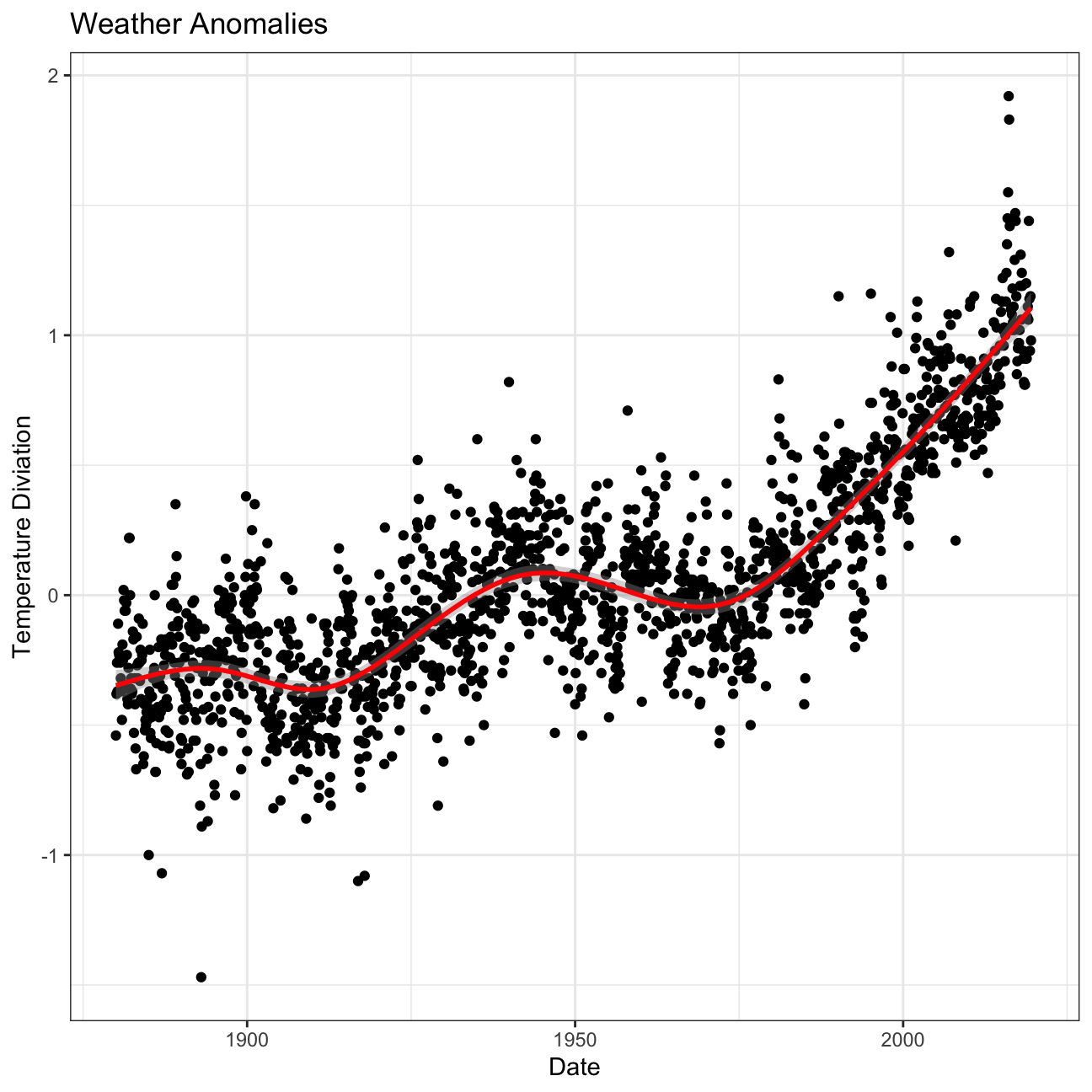

Let us plot the data using a time-series scatter plot, and add a trendline. To do that, we first need to create a new variable called date in order to ensure that the delta values are plot chronologically.

In the following chunk of code, I used the

eval=FALSEargument, which does not run a chunk of code; I did so that you can knit the document before tidying the data and creating a new dataframetidyweather. When you actually want to run this code and knit your document, you must deleteeval=FALSE, not just here but in all chunks wereeval=FALSEappears.

tidyweather <- tidyweather %>%

mutate(date = ymd(paste(as.character(Year), month, "1")),

month = month(date, label=TRUE),

year = year(date))

ggplot(tidyweather, aes(x=date, y = delta))+

geom_point()+

geom_smooth(color="red") +

theme_bw() +

labs (

title = "Weather Anomalies",

x= "Date",

y= "Temperature Diviation"

)

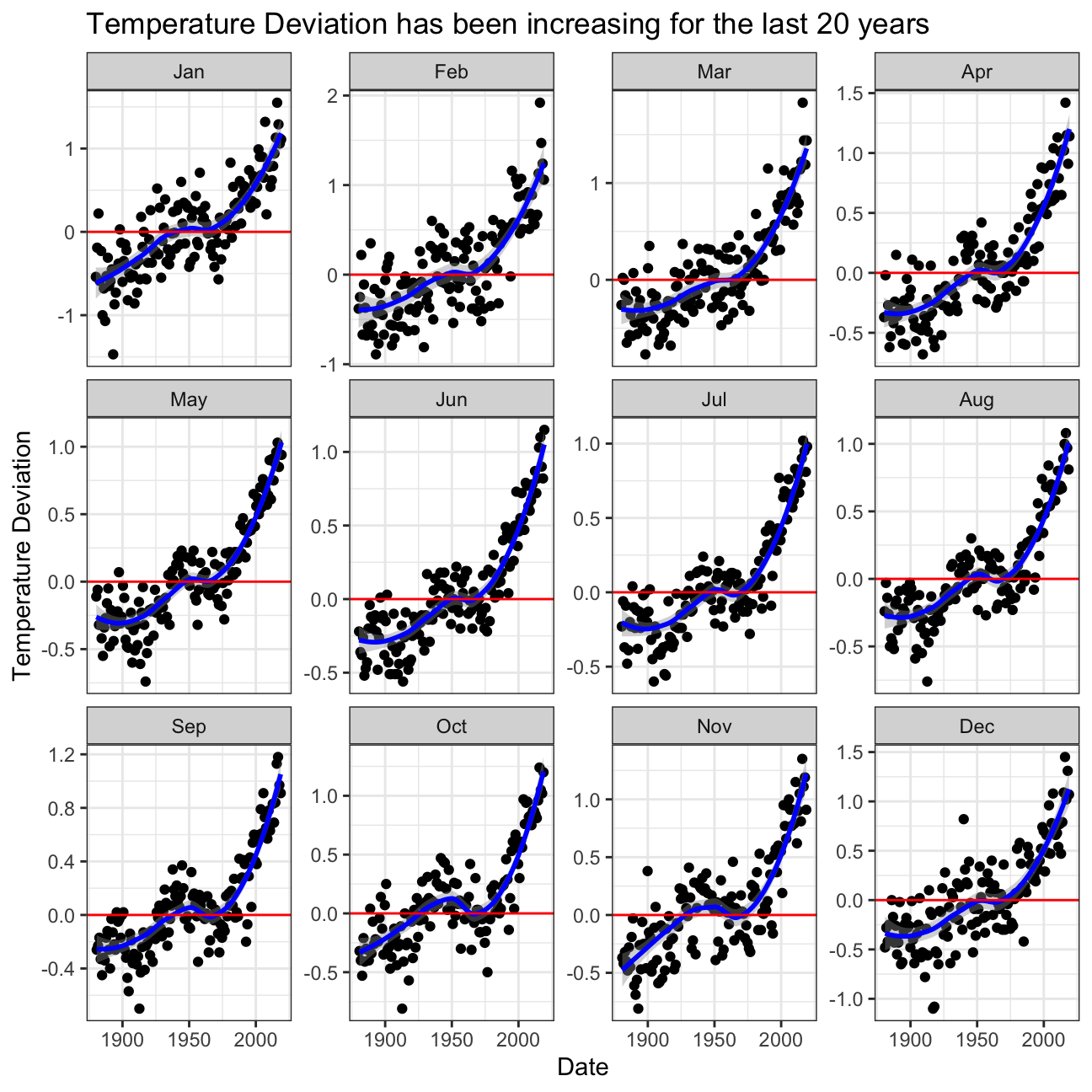

Is the effect of increasing temperature more pronounced in some months? Use facet_wrap() to produce a seperate scatter plot for each month, again with a smoothing line. Your chart should have human-readable labels; that is, each month should be labeled “Jan”, “Feb”, “Mar” (full or abbreviated month names are fine), not 1, 2, 3.

p1 <- ggplot(tidyweather,

aes(x=date,

y=delta))+

geom_point()+

geom_smooth(color="blue")+

theme_bw()+

facet_wrap(~ month, scales="free_y")+

labs(title="Temperature Deviation has been increasing for the last 20 years",

x="Date",

y="Temperature Deviation")

#adding a horizontal line to see how far off our delta is from the expected value

p1+ geom_hline(yintercept=0, color="red")+

NULL

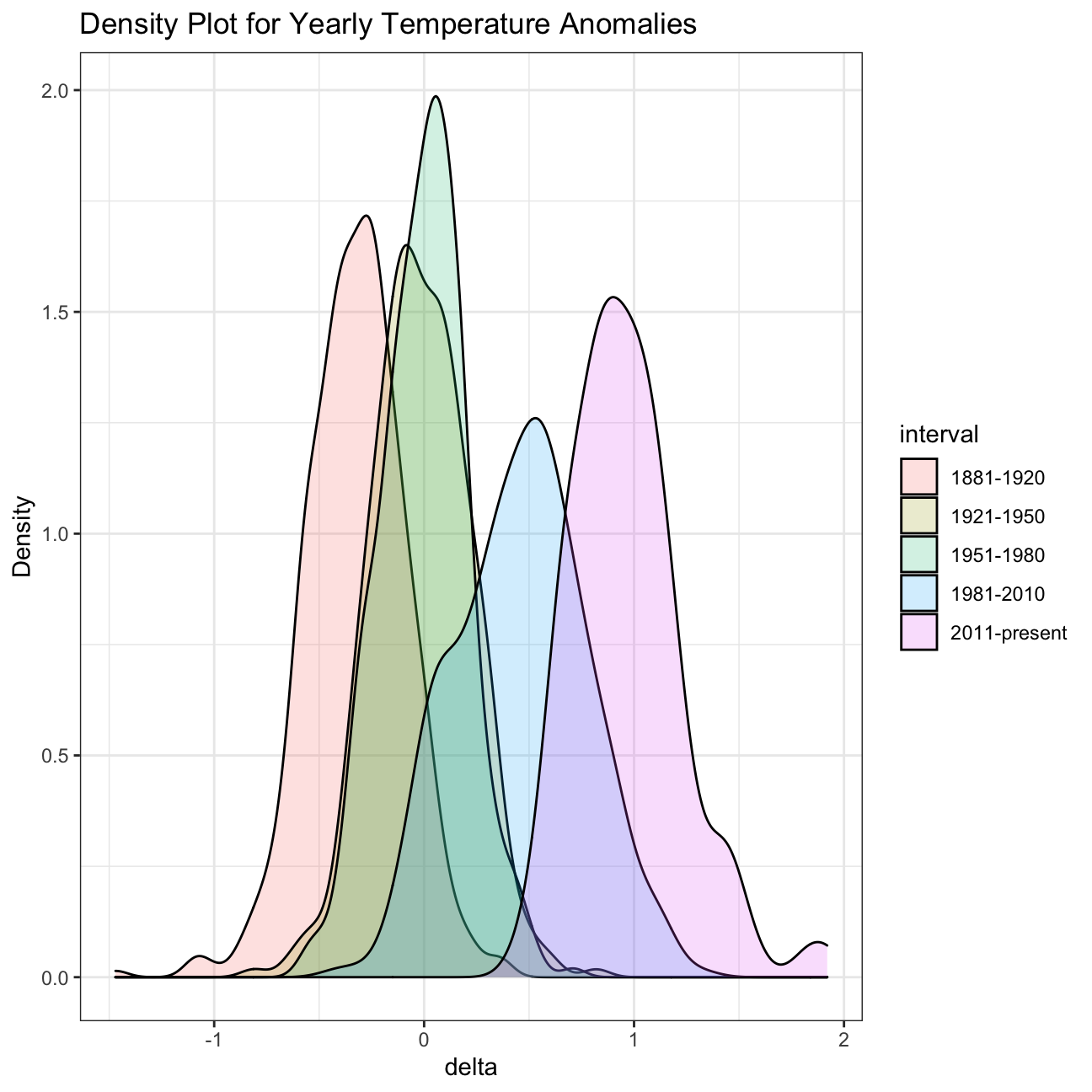

It is sometimes useful to group data into different time periods to study historical data. For example, we often refer to decades such as 1970s, 1980s, 1990s etc. to refer to a period of time. NASA calculates a temperature anomaly, as difference from the base period of 1951-1980. The code below creates a new data frame called comparison that groups data in five time periods: 1881-1920, 1921-1950, 1951-1980, 1981-2010 and 2011-present.

We remove data before 1800 and before using filter. Then, we use the mutate function to create a new variable interval which contains information on which period each observation belongs to. We can assign the different periods using case_when().

comparison <- tidyweather %>%

filter(Year>= 1881) %>% #remove years prior to 1881

#create new variable 'interval', and assign values based on criteria below:

mutate(interval = case_when(

Year %in% c(1881:1920) ~ "1881-1920",

Year %in% c(1921:1950) ~ "1921-1950",

Year %in% c(1951:1980) ~ "1951-1980",

Year %in% c(1981:2010) ~ "1981-2010",

TRUE ~ "2011-present"

))Inspect the comparison dataframe by clicking on it in the Environment pane.

Now that we have the interval variable, we can create a density plot to study the distribution of monthly deviations (delta), grouped by the different time periods we are interested in. Set fill to interval to group and colour the data by different time periods.

#intervals

ggplot(comparison, aes(x=delta, fill=interval))+

geom_density(alpha=0.2) + #density plot with tranparency set to 20%

theme_bw() + #theme

labs (

title = "Density Plot for Yearly Temperature Anomalies",

y = "Density" #changing y-axis label to sentence case

)

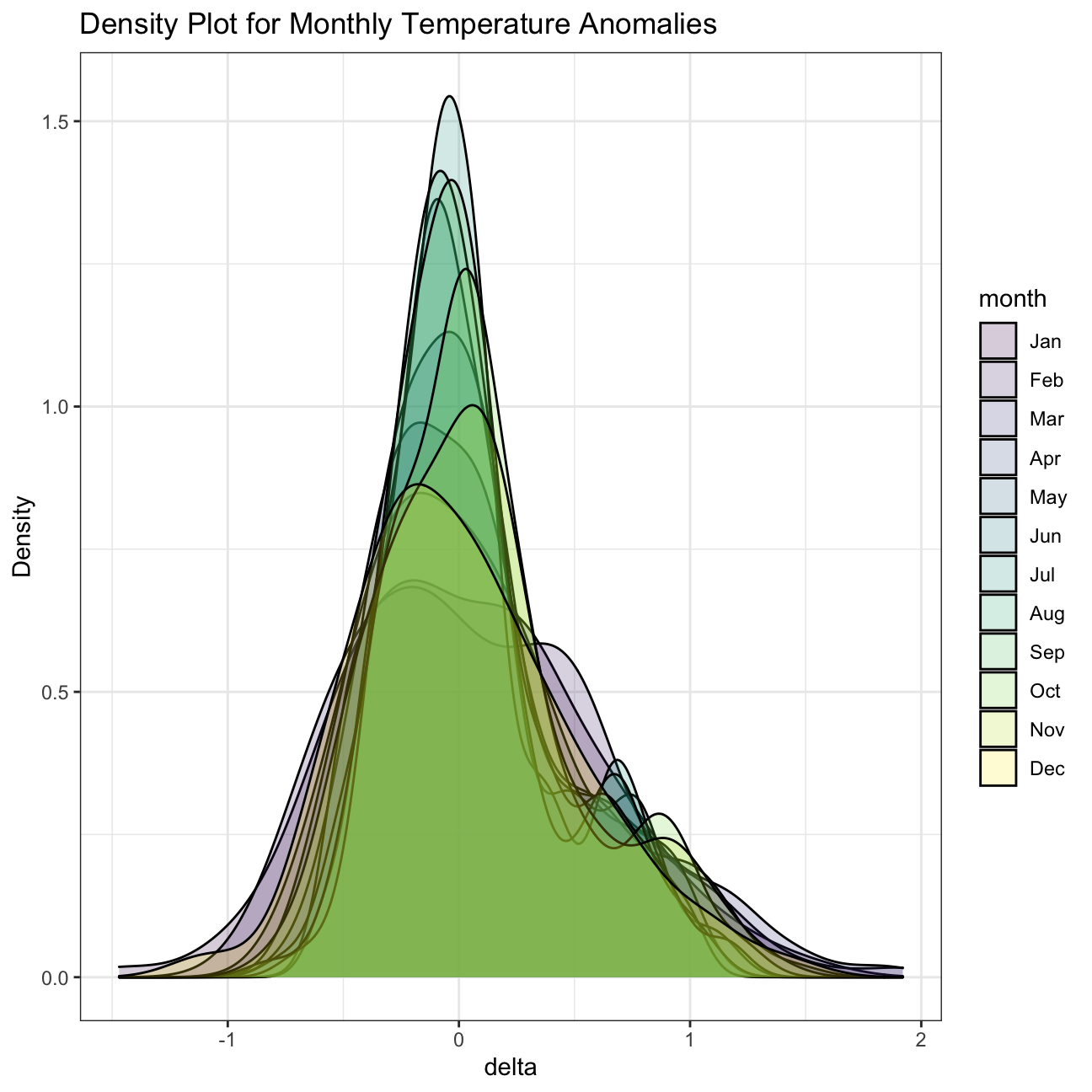

#month

ggplot(comparison, aes(x=delta, fill=month))+

geom_density(alpha=0.2) + #density plot with tranparency set to 20%

theme_bw() + #theme

labs (

title = "Density Plot for Monthly Temperature Anomalies",

y = "Density" #changing y-axis label to sentence case

)

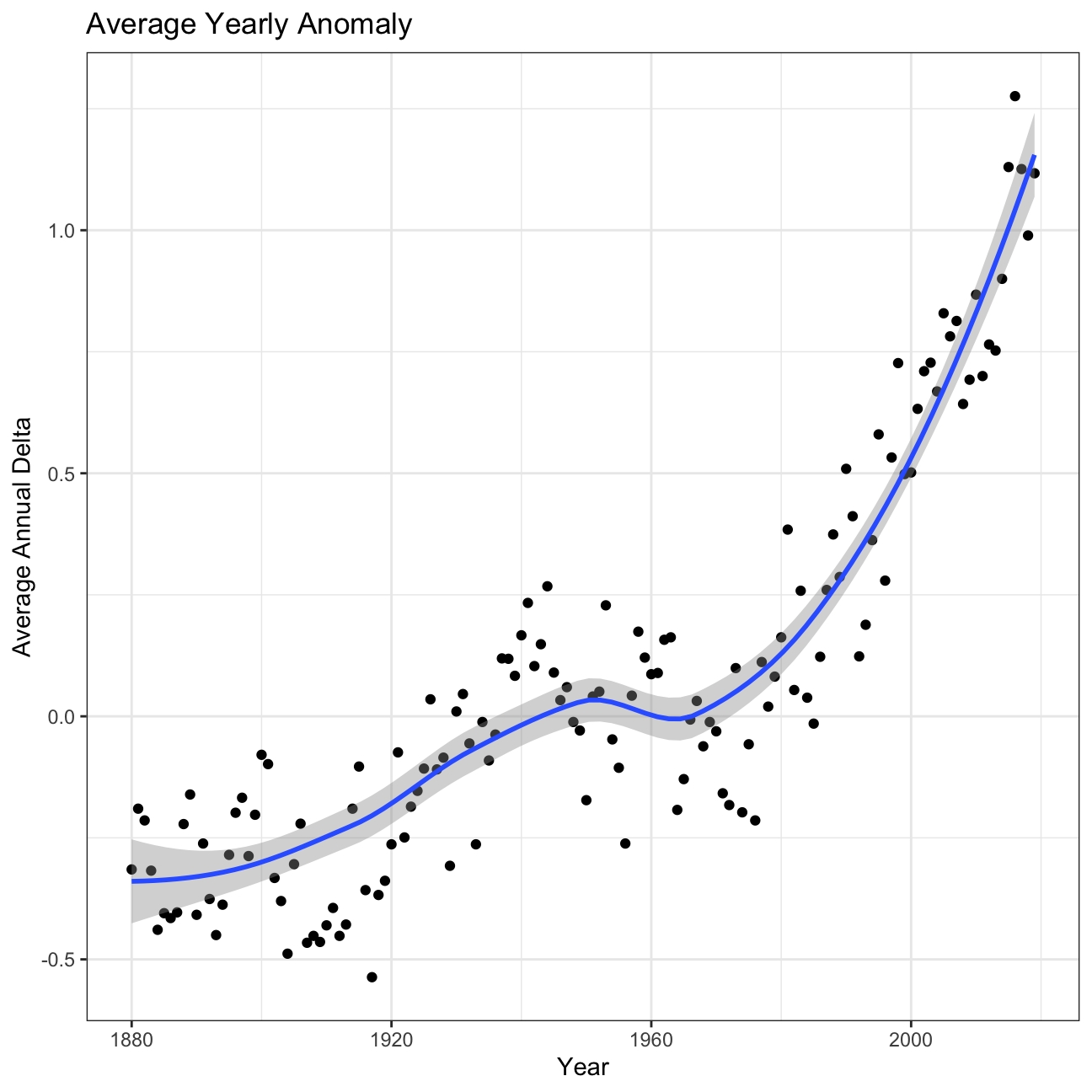

So far, we have been working with monthly anomalies. However, we might be interested in average annual anomalies. We can do this by using group_by() and summarise(), followed by a scatter plot to display the result.

#creating yearly averages

average_annual_anomaly <- tidyweather %>%

group_by(Year) %>% #grouping data by Year

# creating summaries for mean delta

# use `na.rm=TRUE` to eliminate NA (not available) values

summarise(annual_average_delta = mean(delta, na.rm=TRUE))

#plotting the data:

ggplot(average_annual_anomaly, aes(x=Year, y= annual_average_delta))+

geom_point()+

#Fit the best fit line, using LOESS method

geom_smooth() +

#change to theme_bw() to have white background + black frame around plot

theme_bw() +

labs (

title = "Average Yearly Anomaly",

y = "Average Annual Delta"

)

Confidence Interval for delta

NASA points out on their website that

A one-degree global change is significant because it takes a vast amount of heat to warm all the oceans, atmosphere, and land by that much. In the past, a one- to two-degree drop was all it took to plunge the Earth into the Little Ice Age.

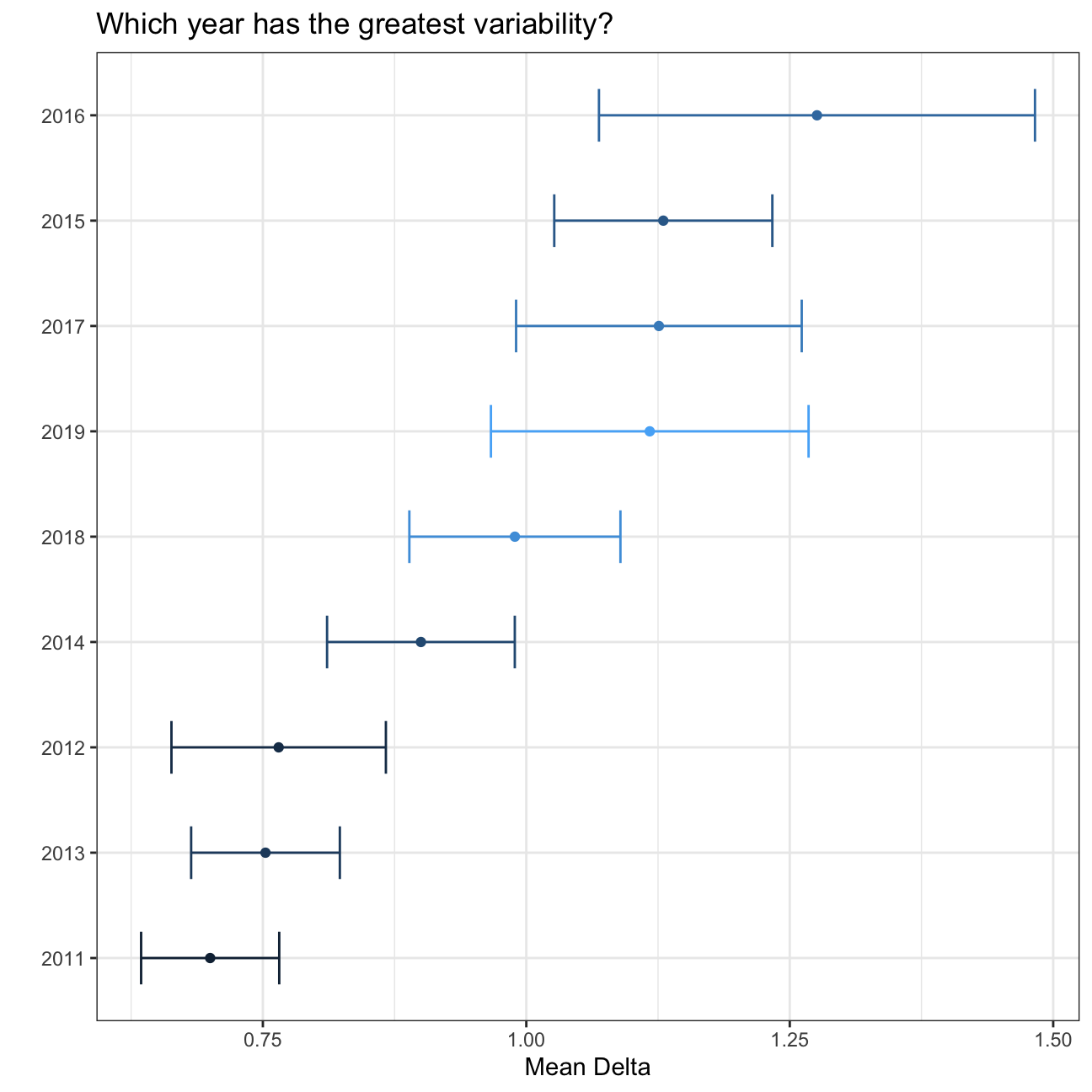

Your task is to construct a confidence interval for the average annual delta since 2011, both using a formula and using a bootstrap simulation with the infer package. Recall that the dataframe comparison has already grouped temperature anomalies according to time intervals; we are only interested in what is happening between 2011-present.

formula_ci <- comparison %>%

filter(interval=="2011-present", !is.na(delta)) %>%

group_by(Year) %>%

summarise(min_delta=min(delta),

mean_delta=mean(delta),

median_delta=median(delta),

max_delta=max(delta),

sd_delta=sd(delta),

count=n(),

# get t-critical value with (n-1) degrees of freedom

t_critical = qt(0.975, count-1),

se_delta = sd(delta)/sqrt(count),

margin_of_error = t_critical * se_delta,

delta_low = mean_delta - margin_of_error,

delta_high = mean_delta + margin_of_error

)

# choose the interval 2011-present

# what dplyr verb will you use?

# calculate summary statistics for temperature deviation (delta)

# calculate mean, SD, count, SE, lower/upper 95% CI

# what dplyr verb will you use?

#print out formula_CI

formula_ci## # A tibble: 9 x 12

## Year min_delta mean_delta median_delta max_delta sd_delta count t_critical

## <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <int> <dbl>

## 1 2011 0.54 0.7 0.685 0.87 0.103 12 2.20

## 2 2012 0.47 0.765 0.81 1.01 0.160 12 2.20

## 3 2013 0.65 0.753 0.735 1.05 0.111 12 2.20

## 4 2014 0.67 0.9 0.885 1.14 0.140 12 2.20

## 5 2015 0.9 1.13 1.13 1.45 0.163 12 2.20

## 6 2016 1.02 1.28 1.10 1.92 0.326 12 2.20

## 7 2017 0.85 1.13 1.08 1.47 0.213 12 2.20

## 8 2018 0.81 0.989 0.925 1.24 0.158 12 2.20

## 9 2019 0.94 1.12 1.11 1.44 0.163 7 2.45

## # … with 4 more variables: se_delta <dbl>, margin_of_error <dbl>,

## # delta_low <dbl>, delta_high <dbl>ggplot(formula_ci, aes(x=reorder(Year,mean_delta), y=mean_delta, colour=Year)) +

geom_point() +

geom_errorbar(width=.5, aes(ymin=delta_low, ymax=delta_high)) +

labs(x=" ",

y= "Mean Delta",

title="Which year has the greatest variability?") +

theme_bw()+

coord_flip()+

theme(legend.position = "none")+

NULL

#Confidence Interval for average annual delta over the whole period of 2011-present

formula_ci_interval <- comparison %>%

filter(interval=="2011-present", !is.na(delta)) %>%

summarise(min_delta=min(delta),

mean_delta=mean(delta),

median_delta=median(delta),

max_delta=max(delta),

sd_delta=sd(delta),

count=n(),

# get t-critical value with (n-1) degrees of freedom

t_critical = qt(0.975, count-1),

se_delta = sd(delta)/sqrt(count),

margin_of_error = t_critical * se_delta,

delta_low = mean_delta - margin_of_error,

delta_high = mean_delta + margin_of_error

)

#print out formula_CI_interval

formula_ci_interval## # A tibble: 1 x 11

## min_delta mean_delta median_delta max_delta sd_delta count t_critical se_delta

## <dbl> <dbl> <dbl> <dbl> <dbl> <int> <dbl> <dbl>

## 1 0.47 0.966 0.94 1.92 0.262 103 1.98 0.0259

## # … with 3 more variables: margin_of_error <dbl>, delta_low <dbl>,

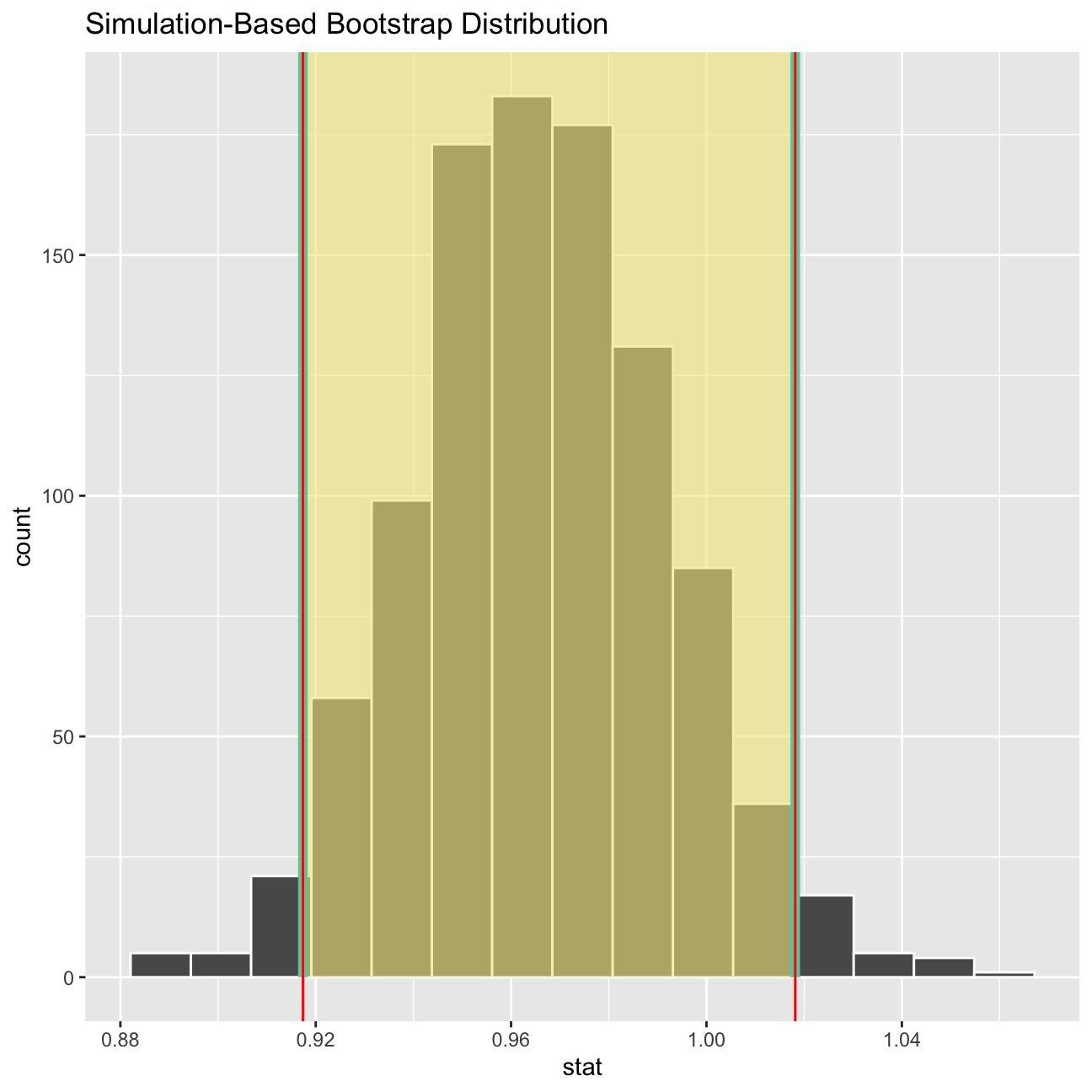

## # delta_high <dbl># use the infer package to construct a 95% CI for delta

set.seed(1234)

#bootstrap for mean delta

boot_delta <- comparison %>%

filter(interval=="2011-present", !is.na(delta)) %>%

# Specify the variable of interest

specify(response = delta) %>%

# Generate a bunch of bootstrap samples

generate(reps = 1000, type = "bootstrap") %>%

# Find the median of each sample

calculate(stat = "mean")

percentile_ci <- boot_delta %>%

get_confidence_interval(level = 0.95, type = "percentile")

percentile_ci## # A tibble: 1 x 2

## lower_ci upper_ci

## <dbl> <dbl>

## 1 0.917 1.02mean_delta <- ggplot(boot_delta, aes(x = stat)) +

geom_histogram() +

labs(title= "Bootstrap distribution of means",

x = "Mean delta per bootstrap sample",

y = "Count") +

geom_vline(xintercept = percentile_ci$lower_ci, colour = 'orange', size = 2, linetype = 2) +

geom_vline(xintercept = percentile_ci$upper_ci, colour = 'orange', size = 2, linetype = 2)

visualize(boot_delta) +

shade_ci(endpoints = percentile_ci,fill = "khaki")+

geom_vline(xintercept = percentile_ci$lower_ci, colour = "red")+

geom_vline(xintercept = percentile_ci$upper_ci, colour = "red")

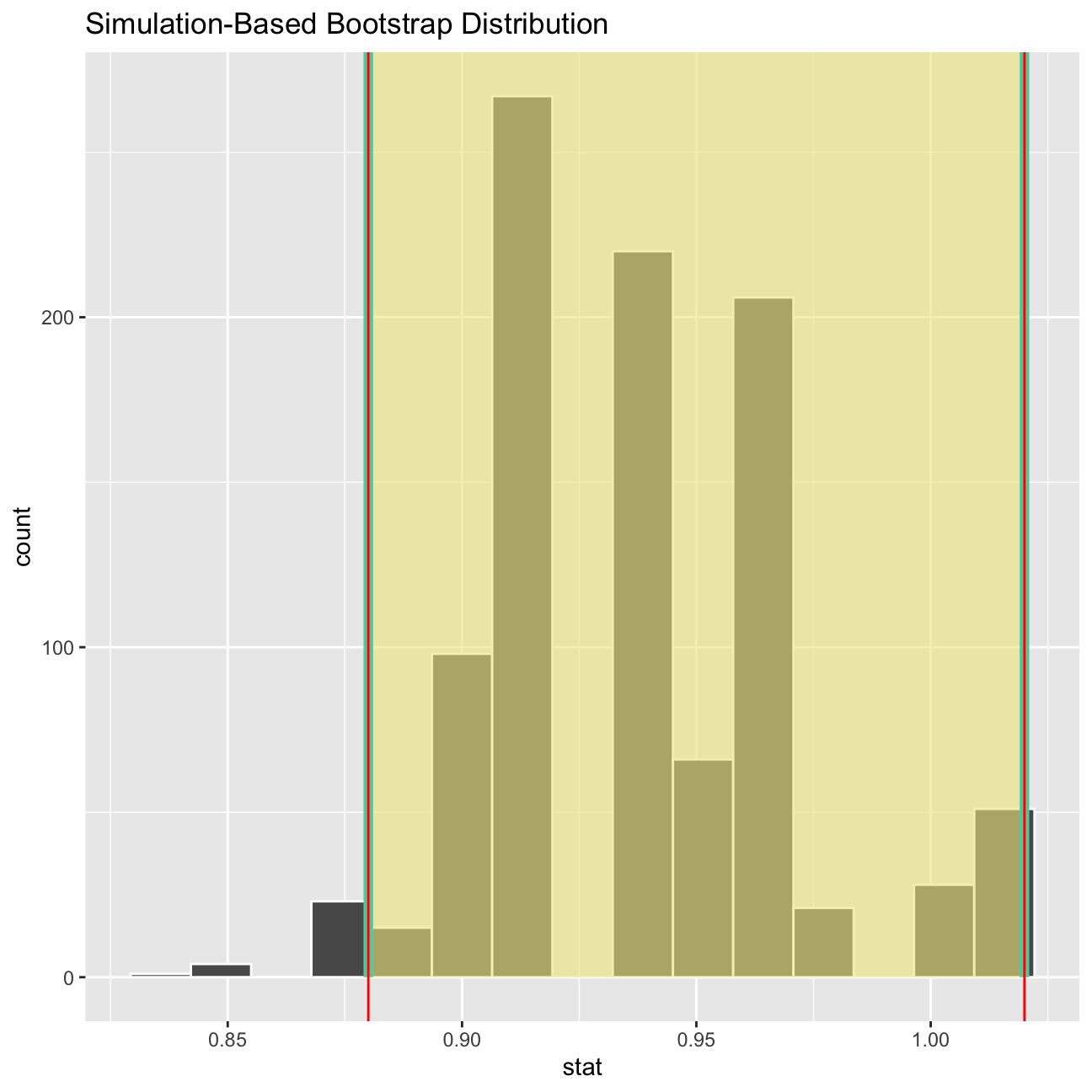

# bootstrap for MEDIAN delta

boot_med_delta <- comparison %>%

# Select 2-bedroom flat

filter(interval=="2011-present") %>%

# Specify the variable of interest

specify(response = delta) %>%

# Generate a bunch of bootstrap samples

generate(reps = 1000, type = "bootstrap") %>%

# Find the median of each sample

calculate(stat = "median")

percentile_med_ci <- boot_med_delta %>%

get_ci(level = 0.95, type = "percentile")

median_delta <- ggplot(boot_delta, aes(x = stat)) +

geom_histogram() +

labs(title= "Bootstrap distribution of medians",

x = "Median delta per bootstrap sample",

y = "Count") +

geom_vline(xintercept = percentile_ci$lower_ci, colour = 'orange', size = 2, linetype = 2) +

geom_vline(xintercept = percentile_ci$upper_ci, colour = 'orange', size = 2, linetype = 2)

visualize(boot_med_delta) +

shade_ci(endpoints = percentile_med_ci,fill = "khaki")+

geom_vline(xintercept = percentile_med_ci$lower_ci, colour = "red")+

geom_vline(xintercept = percentile_med_ci$upper_ci, colour = "red")

What is the data showing us? Please type your answer after (and outside!) this blockquote. You have to explain what you have done, and the interpretation of the result. One paragraph max, please!

Bootstrapping is a nonparametric method which lets us compute estimated standard errors, confidence intervals and hypothesis testing. We resampled a given data set a specified number of times (1000) and calculate a specific statistic from each sample (once median and once mean). From there we can see with what certainty we hit the numbers.